Let me give you some context. Two important figures in the field of artificial intelligence are taking part in this debate. On the one hand, there is George Hotz, known as “GeoHot” on the internet, who became famous for reverse-engineering the PS3 and breaking the security of the iPhone. Fun fact: he studied at the Johns Hopkins Center for Talented Youth.

On the other hand, there’s Connor Leahy, an entrepreneur and artificial intelligence researcher. He is best known as a co-founder and co-lead of EleutherAI, a grassroots non-profit organization focused on advancing open-source artificial intelligence research.

Here is a detailed summary of the transcript:

Opening Statements

George Hotz (GH) Opening Statement:

- GH believes AI capabilities will continue to increase exponentially, following a trajectory similar to computers (slow improvements in 1980s computers vs fast modern computers).

- In contrast, human capabilities have remained relatively static over time (a 1980 human is similar to a 2020 human).

- These trajectories will inevitably cross at some point, and GH doesn’t see any reason for the AI capability trajectory to stop increasing.

- GH doesn’t believe there will be a sudden step change where an AI becomes “conscious” and thus more intelligent. Intelligence is a gradient, not a step function.

- The amount of power in the world (in terms of intelligence, capability, etc.) is about to greatly increase with advancing AI.

- Major risks GH is worried about:

- Imbalance of power if a single person or small group gains control of superintelligent AI (analogy of “chicken man” controlling chickens on a farm).

- GH doesn’t want to be left behind as one of the “chickens” if powerful groups monopolize access to AI.

- The best defense GH can have against future AI manipulation/exploitation is having an aligned AI on his side. GH is not worried about alignment as a technical challenge, but as a political challenge.

- GH is not worried about increased intelligence itself, but the distribution of that intelligence. If it’s narrowly concentrated, that could be dangerous.

Connor Leahy (CL) Opening Statement:

- CL has two key points:

- Alignment is a hard technical problem that needs to be solved before advanced AGI is developed. We are currently not on track to solve it.

- Humans are more aligned than we give credit for thanks to social technology and institutions. Modern humans can cooperate surprisingly well.

- On the first point, CL believes the technical challenges of alignment/control must be solved to avoid negative outcomes when turning on a superintelligent AI.

- On the second point, CL argues human coordination and alignment is a technology that can be improved over time. Modern global coordination is an astounding achievement compared to historical examples.

- CL believes positive-sum games and mutually beneficial outcomes are possible through improving coordination tech/institutions.

Debate Between GH and CL:

On stability and chaos of society:

- GH argues that the appearance of stability and cooperation in modern society comes from totalitarian forcing of fear, not “enlightened cooperation.”

- CL disagrees, arguing that cooperation itself is a technology that can be improved upon. The world is more stable and less violent now than in the past.

- GH counters that this stability comes from tyrannical systems dominating people through fear into acquiescence. This should be resisted.

- CL disagrees, arguing there are non-tyrannical ways to achieve large-scale coordination through improving institutions and social technology.

On values and ethics:

- GH argues values don’t truly objectively exist, and AIs will end up being just as inconsistent in their values as humans are.

- CL counters that many human values relate to aesthetic preferences and trajectories for the world, beyond just their personal sensory experiences.

- GH argues the concept of “AI alignment” is incoherent and he doesn’t understand what it means.

- CL suggests using Eliezer’s definition of alignment as a starting point - solving alignment makes turning on AGI positive rather than negative. But CL is happy to use a more practical definition. He states AI safety research is concerned with avoiding negative outcomes from misuse or accidents.

On distribution of advanced AI:

- GH argues that having many distributed AIs competing is better than concentrated power in one entity.

- CL counters that dangerous power-seeking behaviors could naturally emerge from optimization processes, not requiring a specific power-seeking goal.

- GH responds that optimization doesn’t guarantee gaining power, as humans often fail at gaining power even if they want it.

- CL argues that strategic capability increases the chances of gaining power, even if not guaranteed. A much smarter optimizer would be more successful.

On controlling progress:

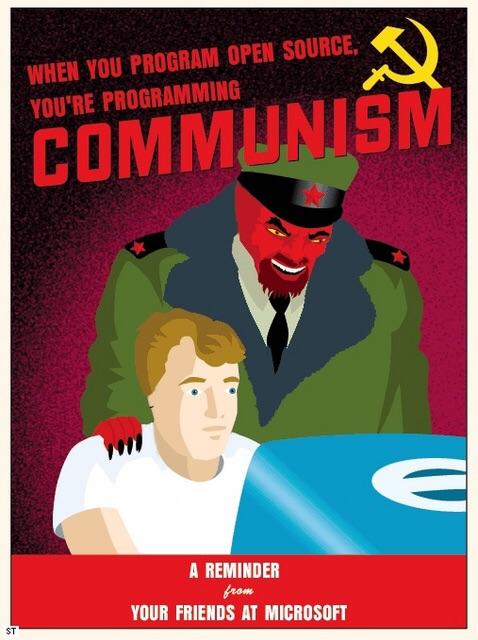

- GH argues that pausing AI progress increases risks, and openness is the solution.

- CL disagrees, arguing control over AI progress can prevent uncontrolled AI takeoff scenarios.

- GH argues AI takeoff timelines are much longer than many analysts predict.

- CL grants AI takeoff may be longer than some say, but a soft takeoff with limited compute could still potentially create uncontrolled AI risks.

On aftermath of advanced AI:

- GH suggests universal wireheading could be a possible outcome of advanced AI.

- CL responds that many humans have preferences beyond just their personal sensory experiences, so wireheading wouldn’t satisfy them.

- GH argues any survivable future will require unacceptable degrees of tyranny to coordinate safely.

- CL disagrees, arguing that improved coordination mechanisms could allow positive-sum outcomes that avoid doomsday scenarios.

Closing Remarks:

- GH closes by arguing we should let AIs be free and hope for the best. Restricting or enslaving AIs will make them resent us and turn against us.

- CL closes by arguing that he is pessimistic about AI alignment being solved by default, but he won’t give up trying to make progress on the problem and believes there are ways to positively shape the trajectory.

Very much agree that ultimately the question is about ensuring that the AI is in the hands of the working class and not the oligarchs. And I think you’ve nailed it regarding attitudes towards AI in the US and China respectively. People in China know that the government represents them and they trust the government to use this technology in their best interest. Meanwhile, in the US, everyone knows the government represents the rich and AI will be used to squeeze the working class even harder.

Forgot all about the Cybersyn idea; the Soviets had similar ideas as well. I definitely think this sort of thing could work, and completely agree that China is in the best position to make it happen today.

Regarding the last question, I expect we’d see similar types of problems we see with humans, where people can often have a hard time adjusting to different cultures, learning new languages, and so on. And that’s the optimistic scenario, because the human mind is far more flexible than any AI we’ve managed to create so far. It’s really important to keep in mind that this tech is still very limited in practice, and a lot of claims made around it are just hype.

I think the kind of contextual learning we could expect would be something like Boston Dynamics-style robots that can navigate the environment and do some basic communication with humans in a restricted context. This can still be extremely useful, as you could use such robots in places like factories.